Creating a website that is both functional and aesthetic is not an easy task. In this article, we will guide you through the process of preparing a site outline step by step.

Performance optimization is an important and often forgotten step when creating even the smallest sites that are expected to convert.

This is the fourth part of the web development overview series. In the previous parts, we looked at content, design/UX, backend (part 1), building apps from scratch and frontend development (part 2), and testing and hosting (part 3). In this installment, we will cover what is (or at least should be) done in order to ensure the best experience for your users – performance optimization.

Performance optimization metrics

If you are thinking about optimizing the performance of anything, be it a process, program, system or operation, it’s best to start by deciding which metrics are key and should be the focus of the optimization. Since what’s important varies greatly from project to project, it’s hard to provide a complete list. But for starters, you should at least consider:

Load time of the page


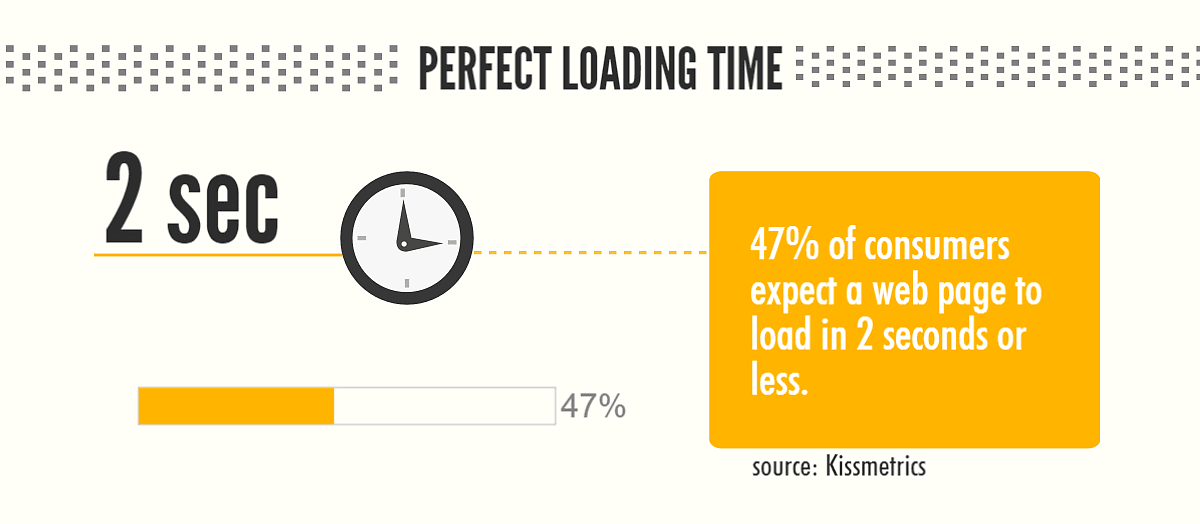

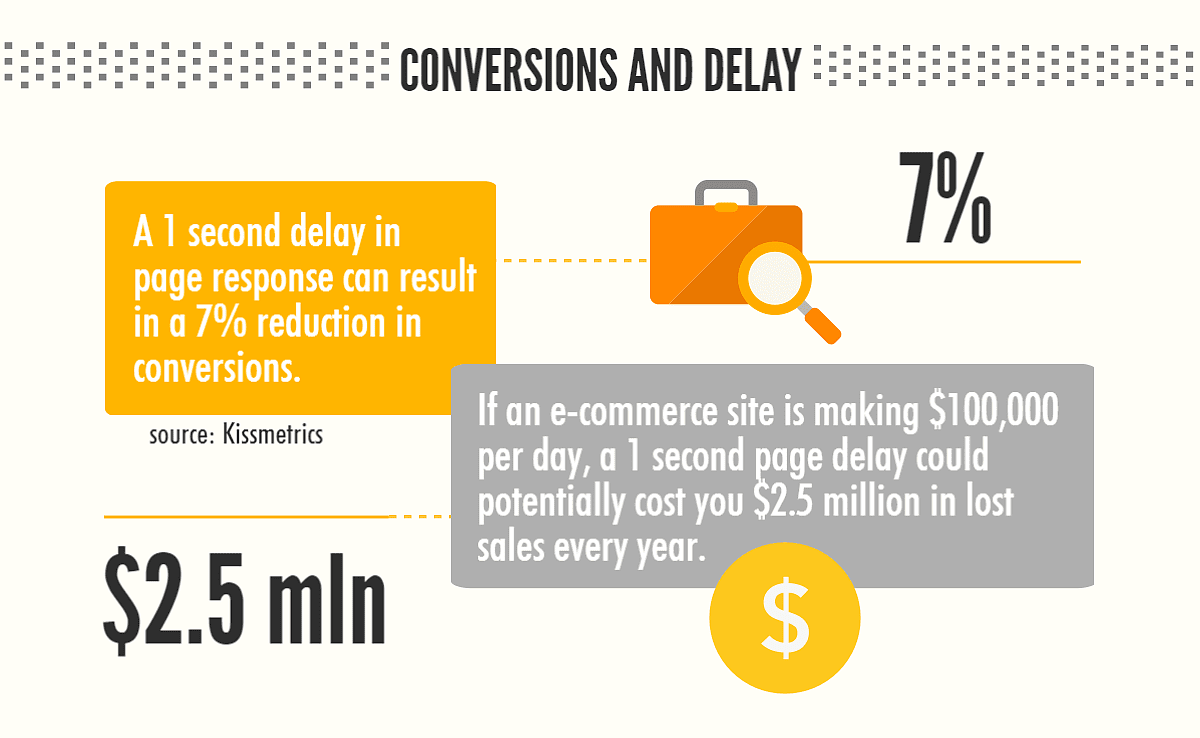

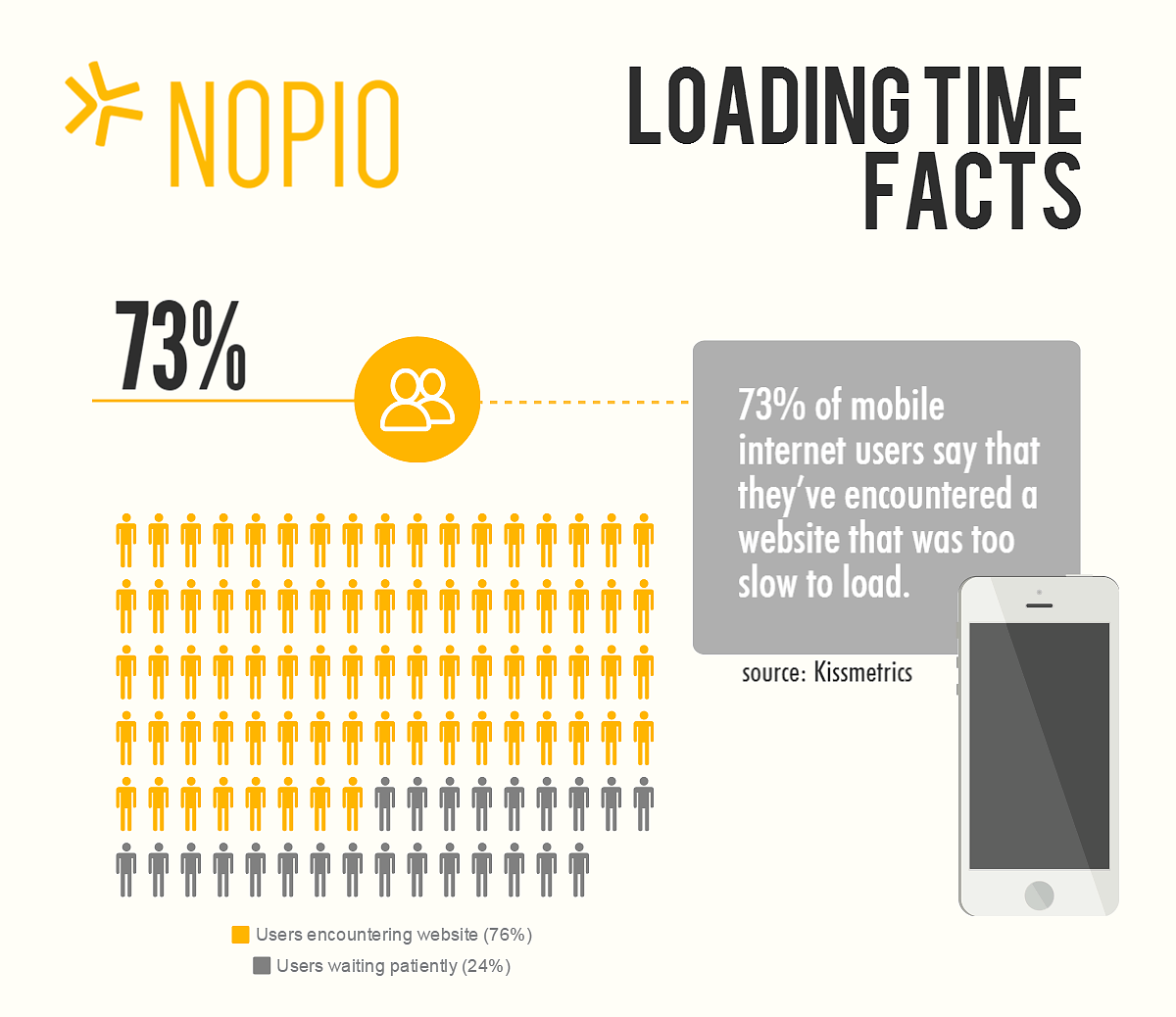

Page load time is the one metric that should never be ignored, and most other technical metrics are aimed at improving it. Study after study (e.g. by Google or by Kissmetrics) shows that ensuring a shorter load time is one of the most important performance optimizations you can do. Google also includes page load time in its ranking calculations. While it may not be as important as the relevancy of the content, it is still a factor. So what can be done to improve page load times?

Time to first byte

Put simply, time to first byte is how much time passes between when a user initiates the request for a given page and when the browser receives the first byte of information. Going slightly deeper into technicalities, this time is spent on:

- translating domain to server’s IP address (DNS query)

- sending request to server’s IP

- server processing request

- server sending response to browser

Note that this metric does not cover the time until the end user sees anything in the browser window; it is measured on the server and network side of things. It also matters when the site is being optimized for the number of requests processed per second.

Several techniques can improve this metric. The site owner should make sure the hosting servers have enough power to process every request, and the database queries should be optimized so that they do not slow down page processing.
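The phases listed above can be timed separately. The following is a minimal sketch, assuming a plain HTTP server (no TLS) and using only the Python standard library; the function name `measure_ttfb` and the keys of the returned dictionary are illustrative choices, not part of any established tool.

```python
import socket
import time


def measure_ttfb(host, port=80, path="/", timeout=10.0):
    """Return the seconds spent on DNS, connecting, and waiting for the first byte.

    Assumption: the server speaks plain HTTP on the given port (no TLS).
    """
    t0 = time.perf_counter()
    # Phase 1: translate the domain to the server's IP address (DNS query).
    family, socktype, proto, _, address = socket.getaddrinfo(
        host, port, type=socket.SOCK_STREAM
    )[0]
    t1 = time.perf_counter()

    with socket.socket(family, socktype, proto) as sock:
        sock.settimeout(timeout)
        # Phase 2: open a connection and send the request to the server's IP.
        sock.connect(address)
        t2 = time.perf_counter()
        request = (
            f"GET {path} HTTP/1.1\r\n"
            f"Host: {host}\r\n"
            "Connection: close\r\n\r\n"
        )
        sock.sendall(request.encode("ascii"))
        # Phases 3 and 4: the server processes the request and starts responding.
        # recv(1) blocks until the first byte of the response arrives.
        first_byte = sock.recv(1)
        t3 = time.perf_counter()

    if not first_byte:
        raise ConnectionError("server closed the connection without responding")
    return {
        "dns": t1 - t0,
        "connect": t2 - t1,
        "wait": t3 - t2,
        "ttfb": t3 - t0,
    }
```

Calling `measure_ttfb("example.com")` (a placeholder host) returns the individual timings, so a slow DNS lookup can be told apart from slow server processing.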

Size of each loaded page

Basically, the more data (in bytes) the browser needs to download to present content, the longer it will take. This is especially important for mobile traffic that doesn’t always use a lightning-fast broadband connection. One year ago, the average page size was about 2 MB – and we can assume it will be growing. Take a look at how long it takes to download a page of this size on various connection speeds:

| Connection type / speed | Page size: 2 MB | Page size: 5 MB |
| --- | --- | --- |
| GPRS / 171 kbps | ~1 minute 30 seconds | ~5 minutes |
| EDGE / 384 kbps | ~50 seconds | ~2 minutes 30 seconds |
| 3G / 2 Mbps (average) | ~8 seconds | ~20 seconds |
| 4G / 10 Mbps (average) | ~1 second | ~4 seconds |

As you can see, it’s super important to keep the size of the page as small as possible – not all your users have 3G or 4G connectivity. Anything below that and, even for the average size, the time goes up significantly. On top of that, keep in mind that load times over 4 seconds are considered very harmful.
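The arithmetic behind such estimates is simple enough to sketch. The helper below is a rough, idealized calculation that assumes 1 MB = 1,000,000 bytes and ignores latency and protocol overhead, so its results are estimates only and need not match the rounded figures in the table exactly. The name `transfer_seconds` is just an illustration.

```python
def transfer_seconds(page_megabytes, speed_kbps):
    """Idealized download time: page size in bits divided by link speed.

    Assumptions: 1 MB = 1,000,000 bytes, 1 kbps = 1,000 bits per second,
    and no latency or protocol overhead.
    """
    bits = page_megabytes * 8_000_000
    return bits / (speed_kbps * 1000)


# Connection speeds taken from the table, in kbps.
connections = {"GPRS": 171, "EDGE": 384, "3G": 2000, "4G": 10000}

for name, kbps in connections.items():
    for size_mb in (2, 5):
        seconds = transfer_seconds(size_mb, kbps)
        print(f"{name}: {size_mb} MB takes about {seconds:.0f} s")
```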

As mentioned in the study from the link above, the main bulk of today’s pages are images. Fortunately, there are a myriad of services that you can use to optimize image size without compromising quality. Two examples are TinyPNG and Kraken.io; we use both and are happy with the results. There are many others out there, but remember to always optimize your images.

In addition to optimizing images, it’s a good idea to have compression enabled for HTML code, JavaScript, and CSS stylesheets. You should also utilize the browser cache on all static assets. That will limit the number of items the browser needs to request from the servers on subsequent requests. For example, going between pages will not require re-downloading shared components like JavaScript files and CSS stylesheets.
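To get a feel for what compression saves, here is a small sketch that gzips a repetitive chunk of markup and reports the sizes. It also builds a `Cache-Control` header value of the kind commonly set on static assets. The sample markup, the helper names, and the one-year cache lifetime are assumptions made for illustration; in practice, compression and caching are normally switched on in the web server configuration rather than in application code.

```python
import gzip


def compression_report(payload: bytes) -> dict:
    """Compare the raw size of a payload with its gzip-compressed size."""
    compressed = gzip.compress(payload)
    saved = 100 * (1 - len(compressed) / len(payload))
    return {
        "original": len(payload),
        "compressed": len(compressed),
        "saved_percent": round(saved, 1),
    }


def cache_headers(max_age_days: int) -> dict:
    """Build a Cache-Control header that lets browsers reuse a static asset."""
    return {"Cache-Control": f"public, max-age={max_age_days * 86400}"}


# Hypothetical sample: markup is usually repetitive, so it compresses well.
html = b"<li class='item'><a href='/page'>Link</a></li>\n" * 500
print(compression_report(html))
print(cache_headers(365))
```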

Number of requests required to load page

This is important when it comes to performance optimization because browsers have limits on parallel downloads when loading the page. Imagine a situation where a browser can download only 4 items at the same time and each can be obtained quite fast, let’s say in about 200ms. It looks OK, but it isn’t if your website requires 80 items (images, JavaScript files and stylesheets) to load. It will start downloading 4 items and hold off on the others until these are done. It’s 20 cycles of roughly 200ms, and that’s 4 seconds already.

In order to avoid these kinds of issues, you can use an approach where all JavaScript files get combined into a single file, and CSS stylesheets get the same treatment. Both these resources should also be minified to limit the size of files requested.
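The back-of-the-envelope calculation above can be written down as a tiny helper. This is a simplified model that assumes every item takes the same time and that downloads happen in strict batches; real browsers behave less tidily.

```python
import math


def estimated_load_ms(items, parallel_limit, ms_per_item):
    """Estimate load time when items are fetched in batches of parallel_limit."""
    cycles = math.ceil(items / parallel_limit)
    return cycles * ms_per_item


print(estimated_load_ms(80, 4, 200))  # 20 cycles of 200 ms -> 4000
print(estimated_load_ms(8, 4, 200))   # a hypothetical leaner page -> 400
```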
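As an illustration of the idea, the sketch below combines several stylesheets into one string and applies a deliberately naive minification (stripping comments and unnecessary whitespace). The function names are made up for this example, and the regular expressions will mangle edge cases such as whitespace inside quoted strings, so real projects would typically rely on a dedicated build tool instead.

```python
import re


def minify_css(css: str) -> str:
    """Naively minify CSS: drop comments and collapse whitespace."""
    css = re.sub(r"/\*.*?\*/", "", css, flags=re.S)  # remove /* comments */
    css = re.sub(r"\s+", " ", css)                   # collapse runs of whitespace
    css = re.sub(r"\s*([{};:,])\s*", r"\1", css)     # trim around punctuation
    return css.strip()


def bundle_css(stylesheets: list) -> str:
    """Combine several stylesheets into one minified string (one request)."""
    return "".join(minify_css(sheet) for sheet in stylesheets)


sheets = [
    "body {\n  color: red; /* brand color */\n}\n",
    "h1 {\n  margin: 0;\n}\n",
]
print(bundle_css(sheets))  # body{color:red;}h1{margin:0;}
```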

Use a Content Delivery Network (CDN) for static assets

This gets overlooked by many site owners, but can have a great impact on general site behaviour. You want to make sure that the rarely changing assets – images, JavaScript files, and CSS – are delivered to the browser as effectively as possible.

In the usual set-up, the site is served by one or many servers that reside in a certain location on the planet – for example in an Amazon data center on the East Coast. In this case, all requests are passed to the server, which then needs to deliver content that changes dynamically between requests, as well as static assets that are identical every single time they are requested. At this point, another enemy of website performance optimization enters – the distance between the server and the end user.

In order to save our precious load time we want to make sure the static resources are loading for the user as fast as possible. This can be achieved by shortening the distance. You may think it best to just run a copy of the whole website on multiple servers spread around the world, but this adds a lot of complications and is not very cost effective.

What is usually done instead is that the static assets are served not from the server itself but from a Content Delivery Network (CDN). It’s a cloud of servers spread around the world that specialize in one thing only – delivering static assets as fast as technically possible. The goal is that the only limitation on asset loading is the end user’s connection speed.
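On the application side, switching to a CDN often comes down to pointing static asset URLs at a different host while dynamic pages stay on the origin server. The sketch below shows one way this could look; the hostname `cdn.example.com`, the list of extensions, and the function name `asset_url` are all hypothetical and would depend on your CDN provider and site set-up.

```python
import posixpath

# Hypothetical CDN hostname; replace with the one your provider assigns.
CDN_HOST = "https://cdn.example.com"

# File types treated as static assets in this sketch.
STATIC_EXTENSIONS = {".png", ".jpg", ".gif", ".css", ".js"}


def asset_url(path: str) -> str:
    """Return a CDN URL for static assets and leave dynamic paths unchanged."""
    _, extension = posixpath.splitext(path)
    if extension.lower() in STATIC_EXTENSIONS:
        return CDN_HOST + "/" + path.lstrip("/")
    return path


print(asset_url("/static/app.js"))  # https://cdn.example.com/static/app.js
print(asset_url("/blog/latest"))    # /blog/latest
```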

Number of simultaneous requests handled by the server

This factor gets a little less attention, as most small sites rarely hit this limit. The problem is that if there’s a traffic spike and the limit is suddenly reached, it usually brings the site down (rendering it unreachable for most users). This happens because the web server is set to handle only a certain number of simultaneous requests. That number changes on a case by case basis; it depends on the number of processors and the amount of memory on the server, plus how much memory each application request needs.

What happens when the limit is reached and the server receives more requests per unit of time than it can serve in that time? The overflow sits in a queue, waiting to be processed. Then, usually after 60 seconds, the request times out and the user needs to refresh the page to try again – increasing the load even more.

There are many things that can be done to avoid this issue. The best general advice is to test the limit in advance by performing load tests using tools like Apica’s Load Testing Tool, loadimpact.com, BeesWithMachineGuns, or the good old siege command. Knowing the limit, the site owner and maintainers should work on predicting when a traffic spike big enough to reach it may occur. If there’s a chance that, for example, advertising will increase the traffic in such a way, the infrastructure should be made ready for it in advance, by adding more servers or upgrading the existing ones.
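The queueing behaviour described above can be illustrated with a toy simulation. It is a simplified model that assumes a constant arrival rate, a fixed serving capacity per second, and a first-in-first-out queue; it does not model users retrying after a timeout, which in reality makes things worse. All names and numbers are illustrative.

```python
from collections import deque


def simulate_overload(arrivals_per_sec, capacity_per_sec, duration_sec, timeout_sec=60):
    """Simulate a request queue and return (served, timed_out, still_queued)."""
    queue = deque()  # arrival time (in seconds) of each waiting request
    served = 0
    timed_out = 0
    for now in range(duration_sec):
        # New requests arrive and join the back of the queue.
        queue.extend([now] * arrivals_per_sec)
        # Requests that have waited too long give up.
        while queue and now - queue[0] >= timeout_sec:
            queue.popleft()
            timed_out += 1
        # The server handles as many requests as its capacity allows.
        for _ in range(min(capacity_per_sec, len(queue))):
            queue.popleft()
            served += 1
    return served, timed_out, len(queue)


# Traffic within capacity: nothing queues up and nothing times out.
print(simulate_overload(10, 10, 120))  # (1200, 0, 0)
# Traffic 20% above capacity for ten minutes: the backlog grows until requests time out.
print(simulate_overload(12, 10, 600))
```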
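To show what such a load test does in principle, here is a bare-bones sketch that fires a number of concurrent GET requests and summarizes the results. It is not a substitute for the dedicated tools mentioned above, it assumes a plain HTTP server, and it should only ever be pointed at servers you own. The function names and the shape of the summary are made up for this example.

```python
import http.client
import time
from concurrent.futures import ThreadPoolExecutor


def timed_get(host, port, path="/", timeout=10.0):
    """Perform one GET request and return (succeeded, seconds_taken)."""
    start = time.perf_counter()
    try:
        connection = http.client.HTTPConnection(host, port, timeout=timeout)
        connection.request("GET", path)
        response = connection.getresponse()
        response.read()
        succeeded = response.status < 400
        connection.close()
    except (OSError, http.client.HTTPException):
        succeeded = False
    return succeeded, time.perf_counter() - start


def load_test(host, port, total_requests, concurrency, path="/"):
    """Send total_requests GETs, at most `concurrency` at a time."""
    with ThreadPoolExecutor(max_workers=concurrency) as pool:
        results = list(
            pool.map(lambda _: timed_get(host, port, path), range(total_requests))
        )
    durations = [seconds for _, seconds in results]
    succeeded = sum(1 for ok, _ in results if ok)
    return {
        "ok": succeeded,
        "failed": total_requests - succeeded,
        "slowest_seconds": max(durations),
    }
```

For example, `load_test("localhost", 8000, 200, 20)` would send 200 requests, 20 at a time, to a server running locally on port 8000. Raising the concurrency step by step until failures or very slow responses appear gives a rough idea of where the limit lies.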

Is there something I forgot to mention? Let me know by getting in touch here. Leave a comment below or tweet us at @nopio_studio

If you like this series, see also the next parts:

- website caching (Part 5)

- SSL certificates (Part 6)

- code deployment (Part 7)

- WordPress performance plugin to speed up a site
